Legibility Kills What It Measures

In the 18th century, German foresters invented scientific forestry. They looked at a messy, diverse forest and saw inefficiency. Old trees, young trees, deadwood, underbrush, species with no commercial value. They cleared it all and planted Norway spruce in straight rows, evenly spaced, same age, same species. The forest became legible. You could measure it, manage it, predict its yield with precision.

For one generation, it worked brilliantly. Yields surged. Then the forest began to die. The complex undergrowth had been cycling nutrients, retaining moisture, and sheltering the insects, birds, and fungi that kept the soil and the roots healthy. The foresters had not simplified the forest. They had destroyed the system that kept the forest alive while keeping only the part they could see.

James Scott tells this story in *Seeing Like a State* to illustrate a pattern that recurs wherever central authorities impose legibility on complex systems. The pattern is simple: make the illegible legible, optimize what you can now see, and lose what you couldn’t see but depended on.

# I.

Legibility means making something readable from above. A state needs legible citizens (permanent surnames, census records, standardized addresses) to tax and conscript them. A manager needs legible workers (KPIs, time tracking, performance reviews) to evaluate them. The moment you reduce people to a record like this, you flatten them a little. The record is useful, but it is not the person.

Michael Polanyi called the missing part the tacit dimension. We know more than we can tell. A master craftsman cannot fully articulate what makes a joint right. A good doctor reads a patient’s face before looking at the lab results. A trader feels the market shifting before the data confirms it. This knowledge is real, but it was learned through practice rather than instruction. It lives in the body, in habits, in pattern recognition trained over thousands of repetitions. You can write some of it down, sometimes a lot of it, but not all of it, and the part that refuses to fit cleanly into a system is often the part doing the real work.

# II.

Goodhart’s Law states that when a measure becomes a target, it ceases to be a good measure. Most people know the shallow version of this: the metric gets gamed. Teachers teach to the test. Researchers chase citations instead of doing risky work. Managers optimize the quarter because that is the evaluation cycle they live inside.

But there is a deeper version. Sometimes the measure does not just get gamed. It changes the institution so thoroughly that the old aim starts to look naive, sentimental, or inefficient. Schools measured by test scores do not merely game the scores. The slow, hard-to-measure work of developing judgment and curiosity loses its place in the schedule. The same thing happens in research once citation count becomes a career filter, and in public companies once the quarter becomes the only clock that matters. At first the metric is a proxy. After a while it becomes the institution’s memory of what the work is for. The older purpose survives only as rhetoric, then eventually not even that.

# III.

Scott’s central examples are states. He shows how Soviet collectivization, Brasilia’s urban planning, and Tanzanian forced villagization all followed the same pattern. A planner looked at a complex, functioning system, decided it was disorderly, imposed a grid, and watched the system collapse.

Jane Jacobs saw the same thing in cities. A neighborhood that looks chaotic from above (mixed uses, irregular streets, buildings of different ages) is often deeply functional at ground level. The bodega owner watches the street. The mix of commercial and residential keeps foot traffic at all hours. Old buildings provide cheap space for new businesses. Children have places to linger. The street keeps some of its own memory.

When Robert Moses built highways through these neighborhoods, he was not failing to see the order. He was seeing a different kind of order, one that could be drawn on a blueprint and measured by traffic throughput. Once that order wins, the losses show up indirectly: fewer small businesses, weaker street life, less trust, less casual supervision, fewer reasons to stay. What disappears is hard to defend precisely because it was never formalized in the first place.

# IV.

Every previous technology made specific things legible while leaving vast territories of human life in the dark. The printing press made ideas legible but not the process of thinking. Accounting made finances legible but not the judgment behind a deal.

AI is different. It reaches further into the gray zone where people used to rely on feel, memory, and accumulated judgment.

You can see this first in domains where experienced people have been carrying around pattern recognition they could not fully explain. In software, the knowledge that distinguished a staff engineer from a mid-level one (the sense for what will break, the instinct for where complexity hides, the ability to spot brittle code before it fails) becomes much easier to expose, compare, and distribute once a model can read the whole codebase at once.

Writing and taste shift in a similar way. The structural moves that distinguished one writer from another become parameters in a model. What used to look like voice, something formed slowly and unevenly over years, starts to look like a set of reproducible decisions. Recommendation systems do something similar to taste. The slow, private process of liking the wrong thing, getting bored, circling back, changing your mind, stumbling into something difficult and learning to love it, gets compressed into a profile that updates in real time.

Strategy is next. Once a system can model your market, simulate plausible competitors, and generate options faster than you can think through them, the advantage that once belonged to the person who had spent decades in an industry starts to thin out. The unwritten map is still worth something, but less of it stays private for long.

# V.

The hard question is whether this destroys tacit knowledge or mostly democratizes it.

Sometimes the answer is plainly positive. The senior engineer’s instinct gets encoded and shared. The doctor’s clinical eye gets distributed to clinics that would never have had that level of expertise. Craft that once required decades of apprenticeship becomes easier to learn. Real barriers fall, and some of those barriers deserved to fall.

But I do not think the risk is distributed evenly. In medicine, debugging, or rote analysis, making hidden expertise more available may be an unambiguous gain. In craft, judgment, taste, and long-horizon strategy, the trade may be harsher. Some of that knowledge was tacit for a harder reason. The master’s sense for a joint is not a rule waiting to be extracted. It is the residue of ten thousand joints, each slightly different, each teaching something that words cannot capture. Replace this with an algorithm and you get something that looks similar but behaves differently at the edges, which is exactly where tacit knowledge earns its keep.

Both things are true, but not in equal measure. Some tacit knowledge was just unexploited information, waiting for a sufficiently powerful system to extract it. But some of it was constitutively illegible. It existed only as a pattern in a body, a culture, an institution. Formalizing it does not preserve it. It produces a simplified copy that can fill the same niche for a time, but the original slowly loses the conditions that made it possible.

# VI.

Legibility reaches further than measurement. Friction is a kind of protective opacity: the difficulty of getting what you want is part of what prevents wanting from collapsing into consumption. The inner self is opaque in a related way: it is the part of you that has not been flattened into a readable surface for outside systems. Formation is opaque too. It cannot be judged well through snapshots because what matters only shows up over time. Force it into short, legible evaluation cycles and the same pattern returns. The measure replaces the thing. The thing weakens.

When AI makes the self legible (behavioral prediction, emotional modeling, preference extraction), it does not merely observe the self. It begins to replace the function the self was performing. If an external system knows what you want before you do, the inner process of forming a want loses its purpose. The self does not die dramatically. It becomes unnecessary, the way a muscle atrophies when a machine does its work.

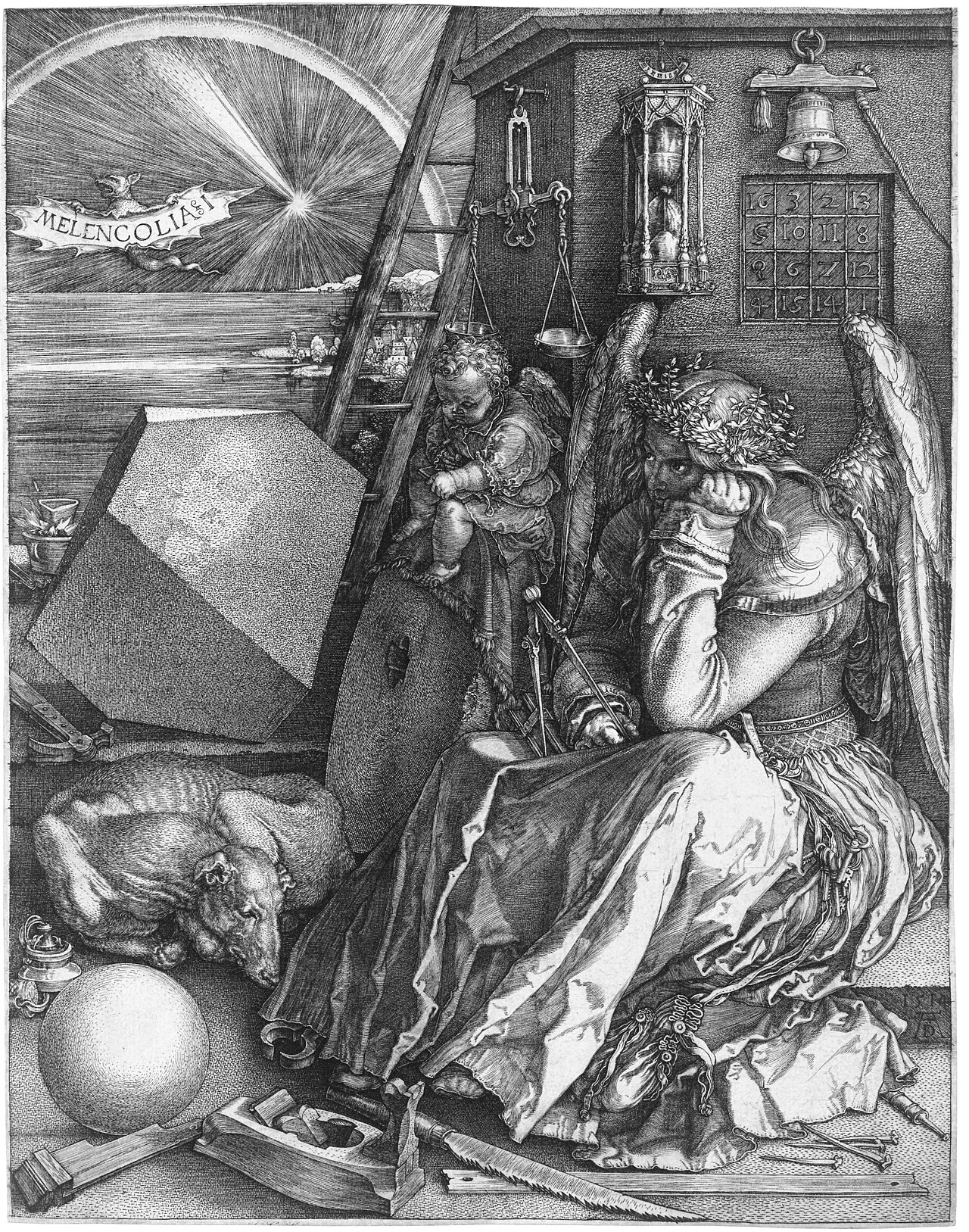

The German foresters did not hate the forest. They wanted to optimize it, to bring it under rational control, and for one generation they succeeded by removing everything they could not measure. The forest died because what they removed looked like noise only inside the measurement system they had chosen. Outside that system, it was the forest.

How much of human life has this structure? How much of what we are depends on remaining partially opaque, even to ourselves? The map is about to get very detailed. The territory may not survive the survey.